Computer Vision Projects

All projects were implemented as part of the Computer Vision course (16720) at Carnegie Mellon University.

1. Spatial Pyramid Matching for Scene Classification

Given an image, the program determines the scene in which it was taken. The image representation is built on the bag-of-visual-words approach, and spatial pyramid matching is used to classify the scene categories.
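As a rough illustration of the representation, the sketch below builds a spatial pyramid histogram and compares two of them with histogram intersection. It assumes the image has already been converted to a "wordmap" (a 2D array of visual-word indices); the function names, the number of layers, and the layer weights are illustrative assumptions rather than the exact course implementation.

```python
import numpy as np

def spatial_pyramid_histogram(wordmap, num_words, num_layers=2):
    """Concatenate weighted per-cell visual-word histograms over a pyramid."""
    features = []
    L = num_layers
    for layer in range(L + 1):
        cells = 2 ** layer  # the layer splits the image into cells x cells blocks
        # Assumed weighting: coarser layers contribute less than finer ones.
        weight = 2.0 ** (-L) if layer == 0 else 2.0 ** (layer - L - 1)
        for band in np.array_split(wordmap, cells, axis=0):
            for cell in np.array_split(band, cells, axis=1):
                hist = np.bincount(cell.ravel(), minlength=num_words)
                features.append(weight * hist)
    feature = np.concatenate(features).astype(float)
    return feature / feature.sum()  # L1-normalize the final descriptor

def histogram_intersection(h1, h2):
    """Similarity between two normalized histograms (1.0 means identical)."""
    return np.minimum(h1, h2).sum()
```

A test image could then be assigned the label of the training image whose descriptor has the highest intersection score.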

An illustrative overview of the process used to build the scene classification system is shown below.

2. Augmented Reality (AR) with Planar Homographies

Implemented an AR application using planar homographies. After finding point correspondences between the two images with an implementation of the BRIEF descriptor, a homography was estimated between them. Using this homography, the images were warped and the AR application was implemented.

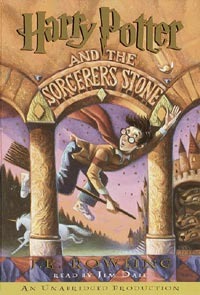

To test the planar homography, a homography was computed between the textbook cover and the textbook on the table, after which the Harry Potter image was warped and overlaid on the textbook.

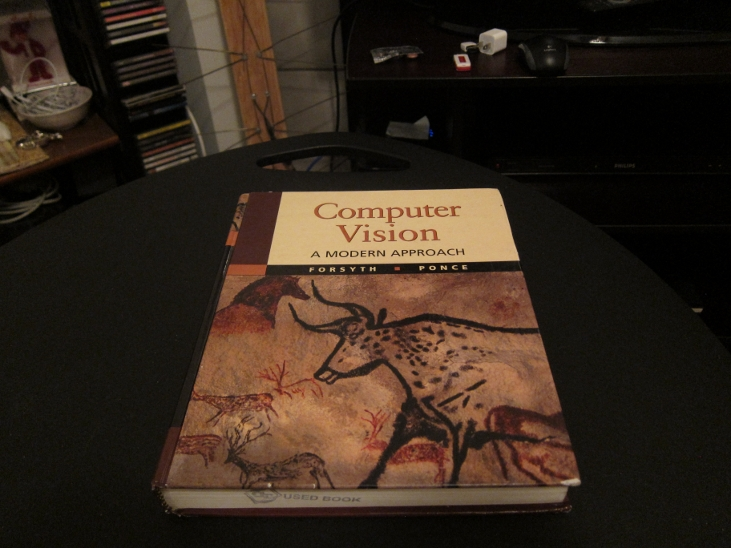

Textbook Cover

Textbook Image

The BRIEF descriptor provided the matching points, which were used to compute the homography.
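Given matched points, a homography can be estimated with the direct linear transform. The following is a minimal sketch under assumptions: the function name and the `x1 ~ H @ x2` convention are illustrative, and no point normalization or outlier rejection is included.

```python
import numpy as np

def compute_homography(x1, x2):
    """Estimate H such that x1 ~ H @ x2 in homogeneous coordinates.

    x1, x2: (N, 2) arrays of corresponding points, N >= 4.
    """
    rows = []
    for (u, v), (x, y) in zip(x1, x2):
        # Each correspondence contributes two linear constraints on H.
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    A = np.asarray(rows, dtype=float)
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]  # fix the scale (assumes H[2, 2] is not zero)
```

With real descriptor matches, some correspondences are usually wrong, so a robust estimator would typically wrap this function.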

The HP cover was then warped and overlaid onto the textbook image to produce the following result.

With the planar homographies working well after some parameter tuning, the next step was to implement the AR application. The goal was to overlay the following videos by computing homographies frame by frame. The resultant video can be downloaded below or viewed via the following link.

3. Lucas-Kanade Tracking

I implemented the Lucas-Kanade method, which is widely used for tracking by estimating optical flow.

The first tracker follows a particular template that is moving in the scene. This was implemented as a two-dimensional tracking problem with a pure translation warp function, with a correction for the template drift problem. The results can be seen in the two videos below.
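The translation-only tracker can be sketched as a Gauss-Newton loop. This is a simplified illustration, not the course implementation: the helper names, the `(x1, y1, x2, y2)` rectangle convention, and the hand-written bilinear sampler are assumptions, and template drift correction is not shown.

```python
import numpy as np

def bilinear_sample(img, xs, ys):
    """Sample img at float coordinates (xs, ys) with bilinear interpolation."""
    h, w = img.shape
    xs = np.clip(xs, 0, w - 1.001)
    ys = np.clip(ys, 0, h - 1.001)
    x0 = np.floor(xs).astype(int)
    y0 = np.floor(ys).astype(int)
    ax, ay = xs - x0, ys - y0
    return ((1 - ax) * (1 - ay) * img[y0, x0] + ax * (1 - ay) * img[y0, x0 + 1]
            + (1 - ax) * ay * img[y0 + 1, x0] + ax * ay * img[y0 + 1, x0 + 1])

def lucas_kanade_translation(template_img, current_img, rect,
                             p0=(0.0, 0.0), num_iters=100, tol=1e-3):
    """Estimate p = (dx, dy) so that current_img(x + p) matches template_img(x)
    for the pixels x inside rect = (x1, y1, x2, y2)."""
    x1, y1, x2, y2 = rect
    xs, ys = np.meshgrid(np.arange(x1, x2 + 1, dtype=float),
                         np.arange(y1, y2 + 1, dtype=float))
    template = bilinear_sample(template_img, xs, ys)
    grad_y, grad_x = np.gradient(current_img)  # derivatives along rows, columns
    p = np.array(p0, dtype=float)
    for _ in range(num_iters):
        warped = bilinear_sample(current_img, xs + p[0], ys + p[1])
        error = (template - warped).ravel()
        # For a pure translation the Jacobian is just the warped image gradient.
        A = np.stack([bilinear_sample(grad_x, xs + p[0], ys + p[1]).ravel(),
                      bilinear_sample(grad_y, xs + p[0], ys + p[1]).ravel()],
                     axis=1)
        dp, *_ = np.linalg.lstsq(A, error, rcond=None)
        p += dp
        if np.linalg.norm(dp) < tol:
            break
    return p
```

Running this between consecutive frames and shifting the rectangle by `p` each time gives a basic tracker.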

The second tracker estimates the dominant affine motion in a sequence of images and subsequently identifies pixels corresponding to moving objects in the scene, which consists of aerial views of moving objects from a non-stationary camera. The results can be seen in the two videos below, one tracking the motion of ants, and the other of moving vehicles.

Finally, the inverse compositional extension of the Lucas-Kanade algorithm was implemented to make tracking more efficient.

4. 3D Reconstruction

The goal is to create a 3D reconstruction of an object given two different images of the object.

After finding corresponding points in two images, the fundamental matrix is estimated using the eight-point algorithm. With the fundamental matrix and the calibrated camera intrinsics, the essential matrix is computed. This is then used to compute a 3D metric reconstruction from 2D correspondences using triangulation.
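A minimal sketch of the normalized eight-point algorithm and the essential matrix computation is given below. The function names and the convention `x2^T F x1 = 0` are assumptions for illustration; triangulation is not shown.

```python
import numpy as np

def eight_point(pts1, pts2):
    """Estimate F with x2^T F x1 = 0 from N >= 8 correspondences ((N, 2) arrays)."""
    def normalize(pts):
        # Translate to the centroid and scale to mean distance sqrt(2).
        mean = pts.mean(axis=0)
        scale = np.sqrt(2) / np.mean(np.linalg.norm(pts - mean, axis=1))
        T = np.array([[scale, 0, -scale * mean[0]],
                      [0, scale, -scale * mean[1]],
                      [0, 0, 1]])
        pts_h = np.column_stack([pts, np.ones(len(pts))])
        return (T @ pts_h.T).T, T

    p1, T1 = normalize(np.asarray(pts1, dtype=float))
    p2, T2 = normalize(np.asarray(pts2, dtype=float))
    # Each correspondence gives one row of the linear system A f = 0.
    A = np.column_stack([p2[:, [i]] * p1 for i in range(3)])
    _, _, vt = np.linalg.svd(A)
    F = vt[-1].reshape(3, 3)
    # Enforce the rank-2 constraint by zeroing the smallest singular value.
    U, S, Vt = np.linalg.svd(F)
    F = U @ np.diag([S[0], S[1], 0.0]) @ Vt
    F = T2.T @ F @ T1  # undo the normalization
    return F / np.linalg.norm(F)

def essential_from_fundamental(F, K1, K2):
    """Essential matrix from F and the intrinsics of camera 1 and camera 2."""
    return K2.T @ F @ K1
```

The normalization step is included because the plain eight-point algorithm is numerically sensitive when applied directly to pixel coordinates.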

I then implemented a method to automatically match points by taking advantage of the epipolar constraint, and made a 3D visualization of the results. In the following figure, the points selected in the left image correspond to points on the epipolar lines in the right image.

The 3D visualization point cloud of the object is shown in the figure below.

5. Neural Networks for Recognition

Implemented a fully connected neural network that recognizes handwritten letters in an image, using the NIST36 dataset and reaching a test accuracy of around 76%.

Given an image with some text, the first step was to create a function that finds each character in the image and draws a bounding box around it, as shown in the images below.

This was achieved with the following steps:

1. Process the image with blurring, thresholding, and morphological opening to classify each pixel as part of a character or background.

2. Find connected groups of character pixels, and place a bounding box around each.

3. Group the letters based on the line of the text they are part of, and sort each group.

4. Take each box, resize to the input size of the network and classify with the network.
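Step 3 above, grouping boxes into text lines and sorting them, can be sketched as follows. The box format `(min_row, min_col, max_row, max_col)` and the overlap heuristic are assumptions for illustration, not necessarily what the original code used.

```python
def group_boxes_into_lines(boxes):
    """Group character boxes into text lines.

    boxes: list of (min_row, min_col, max_row, max_col) tuples.
    Returns a list of lines (top to bottom), each sorted left to right.
    """
    lines = []
    # Visit boxes from top to bottom by vertical center.
    for box in sorted(boxes, key=lambda b: (b[0] + b[2]) / 2):
        center = (box[0] + box[2]) / 2
        if lines and lines[-1]["top"] <= center <= lines[-1]["bottom"]:
            # The center falls inside the current line's vertical extent.
            line = lines[-1]
            line["boxes"].append(box)
            line["top"] = min(line["top"], box[0])
            line["bottom"] = max(line["bottom"], box[2])
        else:
            lines.append({"top": box[0], "bottom": box[2], "boxes": [box]})
    return [sorted(line["boxes"], key=lambda b: b[1]) for line in lines]
```

Each box in the returned order can then be cropped, resized, and passed to the classifier so that the predicted characters come out in reading order.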

The output of the trained network is shown in the figure below. The result is good overall, although some characters are misclassified.

The training and validation accuracy of the network are shown in the plot below.

Image Compression with Autoencoders:

As part of this exercise, I explored how an autoencoder works. An autoencoder is a neural network that is trained to copy its input to its output, but only approximately, which makes it a useful way of learning compressed representations. By changing the activation function in the network used for the above task and adding momentum to speed up training, the following autoencoder output was achieved.

The average PSNR (peak signal-to-noise ratio) of the autoencoder across all the images was 15.68.
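For reference, PSNR between an image and its reconstruction can be computed as in the sketch below. The function name and the assumption that pixel values lie in `[0, max_value]` (here 1.0) are illustrative.

```python
import numpy as np

def psnr(original, reconstructed, max_value=1.0):
    """Peak signal-to-noise ratio in decibels; higher means a closer match."""
    original = np.asarray(original, dtype=float)
    reconstructed = np.asarray(reconstructed, dtype=float)
    mse = np.mean((original - reconstructed) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)
```

Averaging this value over every image in the validation set gives a single summary number for the autoencoder.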

To improve the classification of handwritten letters, I also implemented a convolutional neural network with PyTorch on the same NIST36 dataset. The CNN performed significantly better, with a validation accuracy of 90.2% compared with the 76% accuracy achieved by the fully connected network. The accuracy plot for the CNN is shown in the figure below.

6. Photometric Stereo

Photometric stereo is a computational imaging method to determine the shape of an object from its appearance under a set of lighting directions. This implementation assumes that the object is Lambertian and is imaged with an orthographic camera.

Rendering the n-dot-l lighting

To render the n-dot-l lighting, consider a fully reflective Lambertian sphere with its center at the origin, and an orthographic camera located away from it as shown in the figure below.

With the given resolution of the camera, and three different incoming lighting directions, I simulate the appearance of the sphere under the n-dot-l model as shown in the figures below.
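The rendering step can be sketched as follows. The function name, the default resolution, the pixel size, and the camera placement (on the positive z axis, looking toward the origin) are assumptions; the light direction is assumed to be a unit vector.

```python
import numpy as np

def render_n_dot_l(radius, light, resolution=(120, 160), pixel_size=1.0):
    """Render a Lambertian sphere of unit albedo under one directional light."""
    rows, cols = resolution
    ys, xs = np.mgrid[0:rows, 0:cols].astype(float)
    # Scene coordinates of each pixel, with the sphere centered in the image.
    x = (xs - (cols - 1) / 2) * pixel_size
    y = ((rows - 1) / 2 - ys) * pixel_size  # image rows grow downward
    z_sq = radius ** 2 - x ** 2 - y ** 2
    mask = z_sq > 0  # pixels whose rays hit the sphere
    z = np.sqrt(np.where(mask, z_sq, 0.0))
    # On a sphere centered at the origin the normal is the position over the radius.
    normals = np.stack([x, y, z], axis=-1) / radius
    image = np.clip(normals @ np.asarray(light, dtype=float), 0.0, None)
    image[~mask] = 0.0
    return image
```

Calling the function once per lighting direction produces one rendered image per light.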

Inverting the image formation model: Now armed with the rendering knowledge, I invert the image formation process given the lighting directions. Seven images of a face lit from different directions are given, with the ground-truth directions of light sources. Some of the images are shown below.

1. Calibrated Photometric Stereo - Lighting directions are given

Using the given information, we can find the albedo, or reflectance of the surface, which indicates how much of the light that hits the surface is reflected rather than absorbed. We can also find the surface normals, which encode the depth information of the surface. The albedo image (left) and surface normal image (right) are shown below. The surface normal image is displayed in two colors as a false color map.
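Under the n-dot-l model, each pixel intensity is the dot product of a light direction with a pseudonormal (albedo times normal), so the pseudonormals follow from a least-squares solve. The sketch below assumes intensities stacked as a `(num_images, num_pixels)` matrix and lights as a `(3, num_images)` matrix; these names and shapes are illustrative, and shadows are ignored.

```python
import numpy as np

def estimate_pseudonormals(I, L):
    """Solve L^T B = I for the pseudonormals B in the least-squares sense.

    I: (num_images, num_pixels) intensities, L: (3, num_images) light directions.
    """
    B, *_ = np.linalg.lstsq(L.T, I, rcond=None)
    return B  # shape (3, num_pixels)

def albedo_and_normals(B):
    """Split pseudonormals into per-pixel albedo and unit normals."""
    albedos = np.linalg.norm(B, axis=0)
    normals = B / np.maximum(albedos, 1e-12)  # avoid division by zero
    return albedos, normals
```

Reshaping `albedos` and `normals` back to the image size gives the albedo and normal maps.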

We then estimate the depth from the normals to get the shape of the face. Since the normals represent the derivatives of the depth map, we integrate them using a special case of the Frankot-Chellappa algorithm, which enforces integrability. This results in the following representation.
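A textbook form of Frankot-Chellappa integration is sketched below; it may differ from the special case used in the project. It assumes the depth gradients `zx` and `zy` have already been derived from the normals (for example `zx = -n1 / n3`, `zy = -n2 / n3`, a sign convention that depends on the coordinate frame) and that the surface can be treated as periodic.

```python
import numpy as np

def frankot_chellappa(zx, zy):
    """Integrate a gradient field (zx, zy) into a zero-mean depth map.

    Projects the gradients onto the nearest integrable field in the
    Fourier domain.
    """
    rows, cols = zx.shape
    # Angular frequencies per pixel along x (columns) and y (rows).
    wx, wy = np.meshgrid(2 * np.pi * np.fft.fftfreq(cols),
                         2 * np.pi * np.fft.fftfreq(rows))
    Zx = np.fft.fft2(zx)
    Zy = np.fft.fft2(zy)
    denom = wx ** 2 + wy ** 2
    denom[0, 0] = 1.0  # avoid dividing by zero at the DC term
    Z = (-1j * wx * Zx - 1j * wy * Zy) / denom
    Z[0, 0] = 0.0  # the mean depth is unobservable, so fix it to zero
    return np.real(np.fft.ifft2(Z))
```

The output is defined only up to a constant offset, which is why the mean is set to zero.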

2. Uncalibrated Photometric Stereo: No given lighting direction, just the face images.

Now we estimate the shape of the face directly from the images, without using the lighting directions. Since the lighting directions are unknown, we estimate them by decomposing the image matrix using SVD. This leaves a linear ambiguity, popularly known as the bas-relief (or low-relief) ambiguity.
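The factorization step can be sketched with a rank-3 truncated SVD. How the singular values are split between the two factors is a free choice; the symmetric square-root split below, along with the function name and matrix shapes, is an assumption for illustration.

```python
import numpy as np

def factorize_uncalibrated(I):
    """Factor I (num_images, num_pixels) into lights and pseudonormals.

    Returns L_hat (3, num_images) and B_hat (3, num_pixels) such that
    L_hat.T @ B_hat is the best rank-3 approximation of I.
    """
    U, S, Vt = np.linalg.svd(I, full_matrices=False)
    root = np.sqrt(S[:3])
    L_hat = (U[:, :3] * root).T
    B_hat = root[:, None] * Vt[:3]
    return L_hat, B_hat
```

For any invertible 3x3 matrix `Q`, replacing `B_hat` with `Q @ B_hat` and `L_hat` with `np.linalg.inv(Q).T @ L_hat` reproduces the same images, which is the source of the ambiguity.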

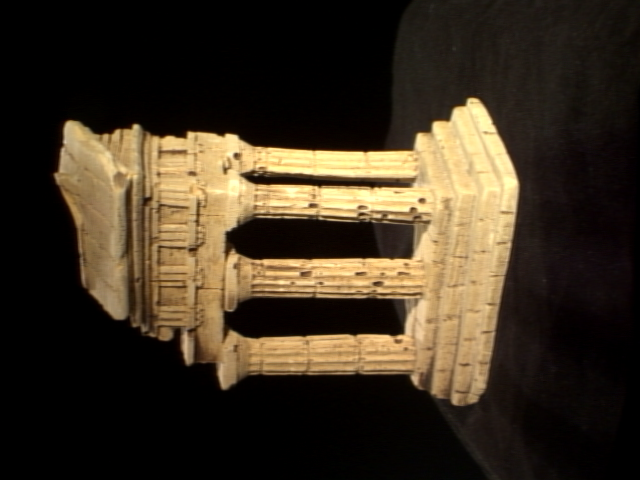

Bas-relief has been used since ancient times to create flattened statues protruding from walls. When viewed from a particular angle, these are difficult to distinguish from full-sized statues, as the depth information looks the same. There exists a whole set of transformations, termed "generalized bas-relief transformations", for which this is true.

After enforcing integrability using the Frankot-Chellappa algorithm, we end up with a reconstruction very similar to the one above. This is shown in the images below.

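However, the ambiguity is only resolved up to a generalized bas-relief matrix, which in its standard form is

$$G = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ \mu & \nu & \lambda \end{bmatrix}, \quad \lambda > 0.$$

This means that for any choice of the parameters with $\lambda > 0$, there is a set of integrable pseudonormals (albedo and normals) producing the same appearance.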

Examples of varying the parameters are shown below. In some cases, when viewed from a particular angle, it produces the same appearance as the original depth reconstruction.
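The effect of the parameters can be explored with a small sketch like the one below. It assumes the standard generalized bas-relief parameterization with rows `(1, 0, 0)`, `(0, 1, 0)` and `(mu, nu, lam)`; the function names and the `(3, N)` matrix shapes are illustrative.

```python
import numpy as np

def gbr_matrix(mu, nu, lam):
    """Generalized bas-relief matrix (assumed standard form, lam > 0)."""
    return np.array([[1.0, 0.0, 0.0],
                     [0.0, 1.0, 0.0],
                     [mu, nu, lam]])

def apply_gbr(B, L, mu, nu, lam):
    """Transform pseudonormals B (3, num_pixels) and lights L (3, num_images).

    The transformed pair renders exactly the same images, since
    (G L)^T (G^-T B) = L^T B.
    """
    G = gbr_matrix(mu, nu, lam)
    B_new = np.linalg.inv(G).T @ B
    L_new = G @ L
    return B_new, L_new
```

Integrating the normals derived from `B_new` for different `mu`, `nu`, and `lam` values gives sheared or flattened versions of the original surface.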